Artificial Intelligence Is Fueling an Arms Race Among Chip Makers

09 November 2017 - 9:29PM

Dow Jones News

By Ted Greenwald

Chip makers are racing to develop artificial-intelligence

products to fuel growth as sales of personal computers and

smartphones cool.

Nvidia Corp., Intel Corp., Advanced Micro Devices Inc., and a

raft of startups are crafting new processors to tap into a broader

AI market that is growing 50% a year, according to International

Data Corp.

Growth has been elusive in some areas of the semiconductor

industry, contributing to a wave of consolidation capped this week

when networking specialist Broadcom Ltd. bid $105 billion for

smartphone-chip leader Qualcomm Inc. in what would be the sector's

biggest-ever deal.

Global spending on AI-related hardware and software could expand

to $57.6 billion in 2021 from $12 billion this year, IDC estimates.

Of that, a sizeable portion will go into data centers, which by

2020 are expected to devote a quarter of capacity to AI-related

computations, IDC projects.

In recent years, certain AI techniques have become central to

the ability of, say, Amazon.com Inc.'s Echo smart-speaker to

understand spoken commands. They enable the Nest security camera

from Google parent Alphabet Inc. to distinguish familiar people

from strangers so it can send an alert. They also allow Facebook

Inc. to match social-media posts with ads most likely to interest

the person doing the posting.

The biggest internet companies -- Google and Facebook as well as

Amazon, International Business Machines Inc., Microsoft Corp., and

their Chinese counterparts -- are packing their data centers with

specialized hardware to accelerate the training of AI software to,

for instance, translate documents.

The online giants all are exploiting an AI approach known as

deep learning that allows software to find patterns in digital

files such as imagery, recordings and documents. It can take time

for such programs to discover meaningful patterns in training data.

Internet giants want to improve their algorithms without waiting

weeks to find out whether the training panned out.

The chip makers are vying to help them do it faster.

Much of Nvidia's 24-year history was spent making high-end

graphics chips for personal computers. Lately, its wares have

proven faster than conventional processors in training AI

software.

Nvidia, which reports third-quarter earnings Thursday, has seen

its sales to data centers nearly triple in the past 12 months to

roughly $1.4 billion, largely driving a nearly sevenfold increase

in the company's stock price to $212.03 over the past two

years.

The optimism around Nvidia "is what everyone in the space has

been experiencing," said Mike Henry, chief executive of AI-chip

startup Mythic Inc. He said an "explosion of interest" has yielded

$15 million in investments in Mythic from venture outfits including

prominent Silicon Valley firm DFJ.

Private investors have nearly doubled their total stake in AI

hardware to $252 million this year, according to PitchBook Data

Inc.

Nvidia's chief rivals aren't standing still. Last year, Intel

bought Nervana Systems for an undisclosed sum. The chip giant is

working with Facebook and others to deliver Nervana-based chips,

intended to outdo Nvidia for AI calculations, by the end of the

year.

Beyond Nervana, Intel is prioritizing AI performance throughout

its data-center product line. Revenue in the divisions responsible

for server processors and programmable chips was up 7% and 10%,

respectively, from the prior year, Intel said in its third-quarter

earnings report last month.

"We don't disclose our total investments in these things, but

it's a large effort" on par with its work to lead the way in

conventional processing, said Naveen Rao, who leads Intel's AI

group.

Advanced Micro Devices, which competes with Nvidia for graphics

in gaming systems, recently shipped its own AI-focused graphics

processor line called Radeon Instinct. Baidu Inc. is a customer,

and AMD said in its most-recent earnings call that other cloud

providers are as well.

Some companies aren't waiting for the big chip vendors. Google

has designed its own AI accelerators, seeking an advantage by

tailoring silicon specifically for its software.

"This field is only getting started," said Ian Buck, Nvidia's

head of accelerated computing, "and it's being invented and

reinvented, it feels like, every quarter."

(END) Dow Jones Newswires

November 09, 2017 07:14 ET (12:14 GMT)

Copyright (c) 2017 Dow Jones & Company, Inc.

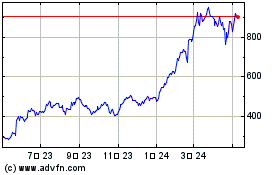

NVIDIA (NASDAQ:NVDA)

과거 데이터 주식 차트

부터 6월(6) 2024 으로 7월(7) 2024

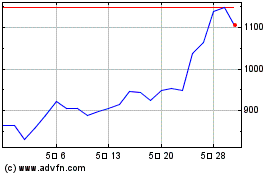

NVIDIA (NASDAQ:NVDA)

과거 데이터 주식 차트

부터 7월(7) 2023 으로 7월(7) 2024